Google Quantum AI has published new benchmark results for its Willow quantum processor demonstrating below-threshold quantum error correction — a result that many in the field consider the most important milestone on the path to practical fault-tolerant quantum computing.

The results, published in Nature, show that as the team scaled the surface code encoding a single logical qubit from distance 3 (a 3×3 grid of data qubits) through distance 5 to distance 7 (a 7×7 grid), the logical error rate fell exponentially, by roughly a factor of two at each step. This is precisely the behaviour predicted by quantum error correction theory, and the first time it has been demonstrated experimentally at this scale.

What "Below Threshold" Means

Quantum error correction works by encoding a single logical qubit across many physical qubits, using redundancy to detect and correct errors without measuring the qubit state directly. Error correction has a cost of its own: every extra physical qubit and every extra check operation is a new source of errors. The critical question is whether the logical error rate can still be made to fall as you add more physical qubits per logical qubit.
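The redundancy idea can be illustrated with a purely classical analogy, sketched below: a bit is copied n times and recovered by majority vote. This is not how Willow's surface code works (a quantum code measures parity checks rather than reading the data directly), and the function name and the 1% flip probability are assumptions chosen for illustration.

```python
from math import comb


def repetition_logical_error(p: float, n: int) -> float:
    """Probability that a majority vote over n copies gives the wrong answer.

    Assumes each copy flips independently with probability p, and that n is odd
    so the vote can never tie.
    """
    if n % 2 == 0:
        raise ValueError("n must be odd so that a majority always exists")
    # The vote fails when more than half of the copies have flipped.
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


if __name__ == "__main__":
    # Illustrative flip probability of 1% per copy (an assumption).
    for n in (1, 3, 5, 7):
        print(n, repetition_logical_error(0.01, n))
```

In this toy model, each added pair of copies lowers the failure probability as long as p is below one half, and raises it once p exceeds one half.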

The threshold theorem states that if the physical error rate is below a certain threshold, adding more physical qubits per logical qubit will exponentially suppress the logical error rate. Above the threshold, adding more qubits makes things worse. Demonstrating below-threshold operation is therefore the key experimental proof that a quantum error correction approach is viable.
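A common rule of thumb for surface codes expresses this as a logical error rate proportional to (p / p_th) raised to the power (d + 1) / 2, where p is the physical error rate, p_th the threshold, and d the code distance. The sketch below uses that heuristic; the prefactor, the 1% threshold, and the physical error rates are illustrative assumptions, not figures from the Willow paper.

```python
def logical_error_rate(p: float, p_th: float, d: int, prefactor: float = 0.1) -> float:
    """Heuristic logical error rate for a distance-d code.

    Uses the rule of thumb prefactor * (p / p_th) ** ((d + 1) / 2).
    The prefactor is an assumed constant, and the formula is only
    meaningful as a probability when p is below p_th.
    """
    return prefactor * (p / p_th) ** ((d + 1) / 2)


def suppression_factor(p: float, p_th: float) -> float:
    """Factor by which the heuristic error rate drops when d increases by 2."""
    return p_th / p


if __name__ == "__main__":
    p_th = 0.01  # assumed threshold of about 1%
    for p in (0.005, 0.02):  # one rate below threshold, one above
        rates = [logical_error_rate(p, p_th, d) for d in (3, 5, 7)]
        print(f"p={p}: rates={rates}, factor per step={suppression_factor(p, p_th)}")
```

Under this model the factor per step is simply p_th / p: greater than one below threshold, so each increase in distance helps, and less than one above threshold, so each increase hurts.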

"This is the result we've been working toward for a decade. Demonstrating below-threshold error correction at scale means the path to fault-tolerant quantum computing is now a matter of engineering, not physics."

— Hartmut Neven, VP Engineering, Google Quantum AI

Willow's Architecture

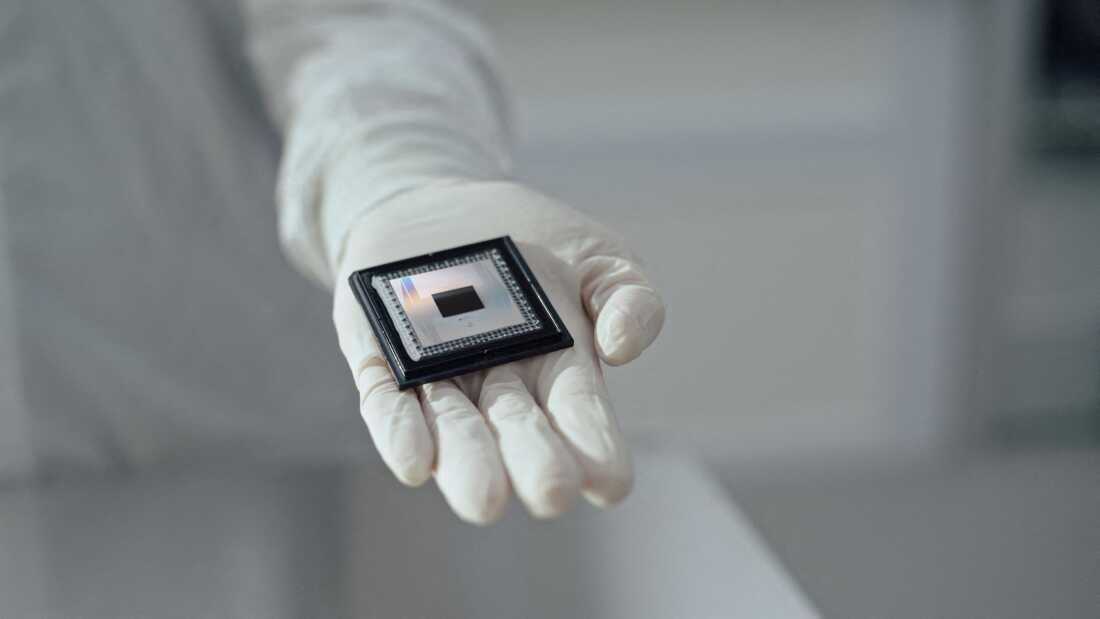

Willow is a 105-qubit superconducting processor fabricated using Google's latest generation of transmon qubits. The chip features median single-qubit gate fidelity of 99.90% and two-qubit gate fidelity of 99.70% — improvements of approximately 0.1 percentage points over the previous Sycamore generation, but critical at the margins where error correction operates.

The processor also completed a random circuit sampling (RCS) benchmark that would take a classical supercomputer an estimated 10²⁵ years to simulate. Google cites this result as evidence of quantum computational advantage, though the practical utility of RCS remains debated.

The Road to Fault Tolerance

Below-threshold operation is necessary but not sufficient for practical fault-tolerant quantum computing. The next challenge is reducing the overhead — the ratio of physical to logical qubits required to achieve a given logical error rate. Current estimates suggest that running a practically useful quantum algorithm with fault tolerance will require millions of physical qubits.
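A rough sense of that overhead can be had from a back-of-the-envelope calculation, sketched below. It assumes a rotated surface code, which uses d² data qubits and d² − 1 measurement qubits at distance d, and assumes the logical error rate keeps falling by a constant factor each time d increases by 2. The reference error rate, suppression factor, and target are placeholder values, not Google's figures.

```python
def physical_qubits_per_logical(d: int) -> int:
    """Qubit count for one rotated surface code patch: d*d data plus d*d - 1 measure."""
    return 2 * d * d - 1


def min_distance(target: float, eps_ref: float, d_ref: int, lam: float) -> int:
    """Smallest distance (in steps of 2 from d_ref) whose error rate is at most target.

    Assumes the error rate is eps_ref at distance d_ref and is divided by
    lam for every increase of 2 in distance.
    """
    if lam <= 1:
        raise ValueError("lam must exceed 1; otherwise larger codes do not help")
    d, eps = d_ref, eps_ref
    while eps > target:
        d += 2
        eps /= lam
    return d


if __name__ == "__main__":
    # Placeholder inputs: 1e-3 logical error at distance 7, halving per step,
    # and a target of 1e-6 per logical qubit.
    d = min_distance(target=1e-6, eps_ref=1e-3, d_ref=7, lam=2.0)
    print(d, physical_qubits_per_logical(d))
```

With these placeholder inputs the sketch arrives at distance 27, or 1,457 physical qubits per logical qubit, so a machine with a thousand logical qubits would already need well over a million physical qubits. A larger suppression factor shrinks that figure quickly, which is why gains in physical fidelity matter so much.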

Google has outlined a roadmap to a "useful quantum computer" by 2029, contingent on continued improvements in qubit fidelity, connectivity, and fabrication yield. The Willow result provides strong evidence that the roadmap is on track.

Competitive Context

The result puts Google ahead of IBM and Microsoft in the race to demonstrate fault-tolerant quantum computing, though all three companies are pursuing different architectural approaches. IBM's heavy-hex lattice and Microsoft's topological qubit approach each have potential advantages at scale that may not be apparent at current qubit counts.